This is the second guest post by Professor Robin Lovell-Badge, who is head of the Division of Stem Cell Biology and Developmental Genetics at the Medical Research Council National Institute for Medical Research in London. After an earlier post which debunked myths about the nine-out-of-ten drug failure rate, Prof. Lovell-Badge has taken on the claim that “only 0.004% of all animal experimentation is of any direct benefit to human health”. In this post Prof. Lovell-Badge explains how this statistic was derived, and why the claim is not supported by the evidence. This post is also appearing on other websites including www.understandinganimalresearch.org.uk.

—

A new statistic is doing the rounds in the animal rights camps. It asserts that “only 0.004 per cent of all animal experimentation is of any direct benefit to human health”. A damning claim if it were true.

The claim originates from a 2003 comment article by William Crowley, who was commenting on a paper by Contopoulos-Ioannidis, Ntzani and Ioannidis. Let us look at both.

Contopoulos-Ioannidis, Ntzani and Ioannidis – Translation of Highly Promising Basic Science Research in Clinical Applications, 2003

In this paper, the authors screened all articles published between 1979 and 1983 in six highly cited basic science journals for the words: therapy, therapies, therapeutic, therapeutical, prevention, preventative, vaccine, vaccines, or clinical. From these they retained all those which suggested there might be a future clinical application.

“[They] only considered technologies that were still at an experimental stage (molecular, cellular, animal, and early non-random humanized studies) that did not have prior application on humans for a specific promise”.

And

“[They] excluded articles that did not describe a clear clinical promise in the abstract; editorials; commentaries; reviews; news articles; articles that focused on mechanism of action, pathophysiology, or diagnosis; and articles on agricultural or veterinary applications”.

Conclusions:

- 25,190 papers were screened.

- 562 included the words mentioned above (therapy, therapeutic… etc.)

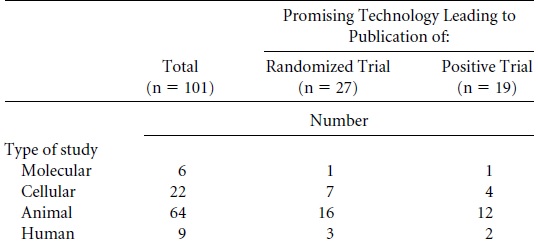

- 101 suggested future clinical application (and thus were further investigated)

- 27 promising technologies have resulted in at least one published trial (by October 2002).

- 19 have one published positive trial.

- 5 technologies were licensed, 4 more have shown limited clinical use

- 1 has shown extensive clinical advantages (angiotensin-converting enzyme inhibitors).
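The counts listed above can be read as a screening funnel. The short Python sketch below is only an illustration: it assumes that each stage is a subset of the one before it (for example, that the 19 positive trials are among the 27 technologies with a published trial), and the names `stages` and `funnel` are made up for this example. It shows how much each percentage depends on which denominator is chosen.

```python
# Counts at each screening stage, as listed in the post.
# Assumption: every stage is a subset of the stage before it.
stages = [
    ("papers screened", 25190),
    ("matched a keyword", 562),
    ("suggested future clinical application", 101),
    ("at least one published trial", 27),
    ("at least one positive trial", 19),
]


def funnel(stages):
    """Return rows of (label, count, % of previous stage, % of first stage)."""
    first = stages[0][1]
    prev = first
    rows = []
    for label, count in stages:
        rows.append((label, count, 100 * count / prev, 100 * count / first))
        prev = count
    return rows


funnel_rows = funnel(stages)
for label, count, pct_prev, pct_first in funnel_rows:
    print(f"{label:40s} {count:6d}  {pct_prev:6.1f}% of previous  {pct_first:7.3f}% of all")
```

Measured against the previous stage, 27 of 101 is roughly 27%; measured against all 25,190 screened papers, the same 27 is roughly 0.1%.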

That only 27 of the 101 papers led to a clinical trial is not a surprise, as the results reported in these papers would have been very early stage findings, and many would have been weeded out in subsequent basic research and pre-clinical evaluation before ever getting to human clinical trials.

If we look at the types of studies which resulted in positive trials, we find that of the 19 out of 101 papers that led to a positive trial (18.8%), the rate was essentially the same for animal studies (12 out of 64, which also equals 18.8%) as for non-animal methods (7 out of 37, which is 18.9%).
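The comparison in the previous paragraph is simple arithmetic, and can be checked with a few lines of Python. This is a minimal sketch using only the counts quoted above; the names `groups` and `success_rate` are illustrative.

```python
# (positive trials, papers examined) for each group, as quoted in the post.
groups = {
    "animal studies": (12, 64),
    "non-animal methods": (7, 37),
    "all selected papers": (19, 101),
}


def success_rate(positive, total):
    """Percentage of papers in a group that led to a positive trial."""
    return 100 * positive / total


rates = {name: success_rate(p, n) for name, (p, n) in groups.items()}
for name, value in rates.items():
    print(f"{name:22s} {value:.2f}%")
```

The two subgroups add up to the overall figures (12 + 7 = 19 and 64 + 37 = 101), and their rates differ by less than 0.2 percentage points.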

Furthermore, in concluding the authors make the telling observation that:

“[B]asic research often leads to subsequent clinical breakthroughs simply by answering fundamental questions instead of targeting specific clinical problems”.

In other words, because Watson and Crick didn’t mention future clinical applicability in their seminal 1953 paper, the screening process used by Contopoulos-Ioannidis et al. could not have picked it up had they chosen the year 1953. However, this doesn’t mean there wasn’t future medical applicability. Among a huge host of advances, our understanding of DNA structure has been essential to our understanding of cancer, without which we would not have most of our modern treatments.

Crowley’s comment piece mentions several papers related to the cloning of growth hormones and cytokines which were missed by the original authors’ algorithm, but have still led to trials and successful medical treatments. In Crowley’s words:

“[T]he algorithm used failed to unearth several key articles related to the cloning of growth hormone and cytokines. Not only did their algorithm miss these articles in the very journals they searched, but the proteins described therein have led to successful clinical trials and the subsequent development of therapeutic agents”.

A further flaw is that the analysis only looks at the 20 years after the publication date. While the authors mention that a rotavirus vaccine was withdrawn, they could not know (because it happened after their article was published) that two rotavirus vaccines have since been approved, based on the bovine rotavirus research reported in the original paper. Similarly, they mention the drug Eflornithine:

“Eflornithine (difluoromethylornithine) may be used to treat trypanosomiasis on special request, but the drug has only been tested in nonrandomized studies for this indication”.

Following successful clinical trials, Eflornithine is now licensed to treat human African trypanosomiasis (sleeping sickness), for which it is an important therapy, and it is now also being evaluated in clinical trials in combination with the drug Nifurtimox. These two therapies effectively triple the “extensive clinical advantages” success rate. It is not known how many other therapies based on the 101 selected papers were in preclinical development or early clinical trials when Contopoulos-Ioannidis et al. wrote their paper and later went on to clinical success.

Crowley – Translation of Basic Research into Useful Treatments: How Often Does It Occur? 2003

The most relevant part of Crowley’s article is contained in a single sentence:

“Of the 25,000 articles searched, about 500 (2%) contained some potential claim to future applicability in humans, about 100 (0.4%) resulted in a clinical trial, and, according to the authors, only 1 (0.004%) led to the development of a clinically useful class of drugs (angiotensin-converting enzyme inhibitors) in the 30 years following their publication of the basic science finding”.

This one sentence contains at least 4 errors.

First off, Crowley has misread the paper when stating that 100 had a clinical trial. 101 papers were assessed to see if they had a clinical trial, but only 27 did. Secondly, it does not make sense to calculate percentages out of the original 25,190 papers when 99.6% of these were screened out and not investigated for clinical trials. The 25,089 papers that were not examined could have led to 10, 100 or 1,000 successful therapies, but we simply don’t know because the authors never looked. Thirdly, to say that only 1 led to the development of a clinically useful class of drugs is also incorrect, since we have found that at least 7 led to licensed drugs that proved useful in the clinic, of which at least 2 (angiotensin-converting enzyme inhibitors and rotavirus vaccine) have extensive clinical advantages. So, of the 27 which had trials, 7 (26%) led to the development of a medical application. Finally, to refer to a “basic science finding” when describing the starting pool of 25,190 papers is also incorrect, since while many will have reported basic science findings, this group of papers will also have included review articles, applied and translational science papers, commentaries, editorials and clinical trial reports.

In short, strict screening methods meant that 99.6% of papers were ignored (including all those looking at diagnosis of human conditions, and all veterinary research), leaving a sample size of 101. There was no evidence in the original article that the remaining 25,089 papers resulted in no future medical benefits (they simply were not checked). Of the 101 analysed papers (those which were likely to be looking at future benefit), 27 (26.7%) had trials. 7 (6.9%) resulted in a licensed application and 2 (2%) resulted in a widely used treatment.
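The effect of the choice of denominator can be shown with a short calculation. The Python sketch below is an illustration only, using the counts quoted in this post; the constant and function names are invented for the example. It recomputes Crowley’s figure alongside the percentages based on the papers that were actually examined.

```python
# Counts quoted in the post.
SCREENED = 25190    # all papers in the six journals
EXAMINED = 101      # papers actually followed up for clinical trials
WITH_TRIALS = 27    # led to at least one published trial
LICENSED = 7        # led to a licensed application (the post's count)
WIDELY_USED = 2     # resulted in a widely used treatment (the post's count)


def pct(part, whole):
    """Return part as a percentage of whole."""
    return 100 * part / whole


crowley_figure = pct(1, SCREENED)                # about 0.004%
not_examined = SCREENED - EXAMINED               # 25,089 papers never followed up
share_not_examined = pct(not_examined, SCREENED) # about 99.6%

trials_of_examined = pct(WITH_TRIALS, EXAMINED)      # about 26.7%
licensed_of_examined = pct(LICENSED, EXAMINED)       # about 6.9%
licensed_of_trials = pct(LICENSED, WITH_TRIALS)      # about 25.9%
widely_used_of_examined = pct(WIDELY_USED, EXAMINED) # about 2%

print(f"Crowley's denominator: {crowley_figure:.4f}%")
print(f"Papers never examined: {not_examined} ({share_not_examined:.1f}%)")
print(f"Licensed, out of papers examined: {licensed_of_examined:.1f}%")
print(f"Licensed, out of those with trials: {licensed_of_trials:.1f}%")
```

Dividing by 25,190 treats the 25,089 unexamined papers as known failures; dividing by 101 or 27 counts only the papers for which an outcome was actually checked.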

Of course, just because Contopoulos-Ioannidis et al. only found clinical trials for 27 of the 101 papers they examined does not mean that clinical trials of therapies based on any of the other 74 papers did not take place after the publication of their study; we have seen that this happened at least twice in the group of 27 papers that they focused on. Unfortunately, since they don’t give any details about these 74 papers, it is impossible to determine how often this happened.

The Claim:

Finally, let us remind ourselves of the claim:

“[O]nly 0.004 per cent of all animal experimentation is of any direct benefit to human health”.

The evidence for this claim that we discuss above does not support such a conclusion. As we saw, the research it is based on includes all manner of research, animal and non-animal (both of which showed the same rate of success in trials that were assessed) – so to make any judgements about animal experiments in particular is unfounded. The claim also assumes that only research that purports to have clinical application (and includes one of four words, or derivations thereof) can have clinical application; however, Crowley points out examples of successful treatments which originated from papers not mentioning the specific words the original authors screened for.

So the claim that the animal rights activists are making is a misrepresentation of an incorrect interpretation of a study that already had very serious limitations.

In essence, the original statement made by those opposed to animal research is not just inaccurate, it is meaningless.

Professor Robin Lovell-Badge

Head of the Division of Stem Cell Biology and Developmental Genetics, MRC National Institute for Medical Research, London

Animal extremists take every opportunity to paint their opponents into a corner. They do it with research, agriculture, transportation, in short with any animal use industries. Your article is important because it helps the reader understand the tactics used to discredit valuable efforts by scientists and by the society that funds and supports such initiatives. We all need medical progress and animal research helps us get there.