Dr. Paul Tar is a research associate at the University of Manchester. In this guest post Paul explains the interdisciplinary journey taken between his PhD, in the analysis of planetary images, and current research post, in cancer studies. In this post he discusses how machine learning used to make measurements in space can also be used to improve the way we measure tumours in mice. If you are interested in writing about your science, we’d love to hear from you.

Starting in Space

After several years of teaching computing in a college of further education, I made the move to research in late 2009. I wanted to start analysing pictures of the planets using computer vision. The end goal was either to take up a career in planetary science or to work for a private space company. Now, nine years later, pictures of planets have given way to pictures of rat brains and mice with tumours – not exactly what I had planned – but the same fundamental ideas are used, and indeed are necessary to answer new questions in other fields. So how did I end up working on them?

It began with the planets: my PhD was on using machine learning in the analysis of Martian imagery, under the supervision of Dr Neil Thacker at the University of Manchester.

During this time some strict scientific criteria had to be laid out and followed. A new statistical method needed to be created to fulfil them, and the whole thing needed to be written, tested, then ultimately used for something useful. The end result was a new machine learning approach that we call Linear Poisson Modelling. As things turned out, the approach was well suited to many other applications outside of planetary science.

Mission to Mars

The best way to do science is to do it quantitatively. This means going beyond descriptions in words and specifying things using mathematics, statistics and real measurements. With planetary images, we wanted to be able to measure the length of river channels, the number of impact craters in different regions, and the surface area of terrains of different textures.

The aim was to build a computer program that could use machine learning to make these measurements for us based on the images we fed into it. In order to do this, we needed to develop methods that allowed a computer to learn the appearance of objects of interest and spot them in new images. Importantly, we needed a way to understand the uncertainties in measurements made using the system, as understanding sources of error is important in science.

Images – particularly those taken with telescopes millions of kilometres away – contain both signal and noise. Signal might be certain textures (collections of pixels) that indicate the object you are searching for (e.g. a river or crater), while noise might be confounding random contributions to textures that make reading the signals hard (e.g. pixels in images with slightly the wrong values because of electrical fluctuations in equipment).

So, given an image of a Martian dune field, to measure the surface area covered by dunes we needed a way to learn what dunes looked like, learn how their appearance could vary, and understand how noise could affect the precision of the measurements. We decided to express the patterns of pixels within Martian images as histograms (a type of bar chart). Some textures would contain more of some pixel patterns than others, some would share a few patterns here and there, and some would have unique patterns seen only in that texture. The relative number of different pixel patterns could also change with things like the orientation, size or density of the surface texture. Noise within the images meant that counts of patterns were never exact. So, Linear Poisson Modelling was developed to describe the shape of histograms, describe how they were allowed to change, and predict how noise in counting affected final measurements made using them.
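To make the idea concrete, here is a minimal Python sketch. It is not the published Linear Poisson Modelling code. It assumes that each texture has a known, normalised histogram of pixel patterns, that an observed histogram is a weighted sum of those histograms, and that bin counts are subject to Poisson counting noise. Under those assumptions, a standard expectation-maximisation update estimates how many counts each texture contributed. The function name, the two example textures and all the numbers are made up for illustration.

```python
def estimate_quantities(observed, components, iterations=200):
    """Estimate how many counts each texture contributed to `observed`.

    observed:   list of bin counts from the image being measured
    components: one normalised histogram (summing to 1) per texture
    """
    n_bins = len(observed)
    # Start by splitting the total count evenly between the textures.
    quantities = [sum(observed) / len(components)] * len(components)
    for _ in range(iterations):
        # Counts we would expect in each bin given the current estimate.
        expected = [
            sum(q * comp[b] for q, comp in zip(quantities, components))
            for b in range(n_bins)
        ]
        # Expectation-maximisation update for a Poisson likelihood:
        # share out each observed bin in proportion to each texture's
        # expected contribution to it.
        quantities = [
            q * sum(comp[b] * observed[b] / expected[b]
                    for b in range(n_bins) if expected[b] > 0)
            for q, comp in zip(quantities, components)
        ]
    return quantities


# Hypothetical pattern histograms for two textures.
dunes = [0.6, 0.3, 0.1, 0.0]
plains = [0.1, 0.2, 0.3, 0.4]

# A noise-free image made of 300 "dune" counts and 700 "plains" counts.
observed = [250, 230, 240, 280]

estimate = estimate_quantities(observed, [dunes, plains])
print([round(q, 1) for q in estimate])
```

With real images the counts would be noisy, so the estimates would scatter around the true quantities. Predicting the size of that scatter is the part of the method that the sketch leaves out.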

Our first publication covered the theory, which took several years to develop. Our second publication showed how we could learn the appearance of different types of Martian landscapes and then estimate how much of each type was present within an image. Our third publication used the method in a slightly modified way to help the citizen science project Moon Zoo to identify lunar impact craters.

Back to Earth

In our quest to show that our new machine learning method had more general applicability, we applied it to mass spectra and medical images. In mass spectrometry, one way to measure the chemicals within a sample is to zap them with a laser, accelerate the resulting fragments in an electric field, and then count the number of fragments that reach a detector in different time periods. With the help of co-investigator Dr Adam McMahon and his PhD student, we used the method to show that we could learn the histogram shapes created by different biological samples and measure the proportions of those samples up to twice as precisely as some conventional alternative methods.

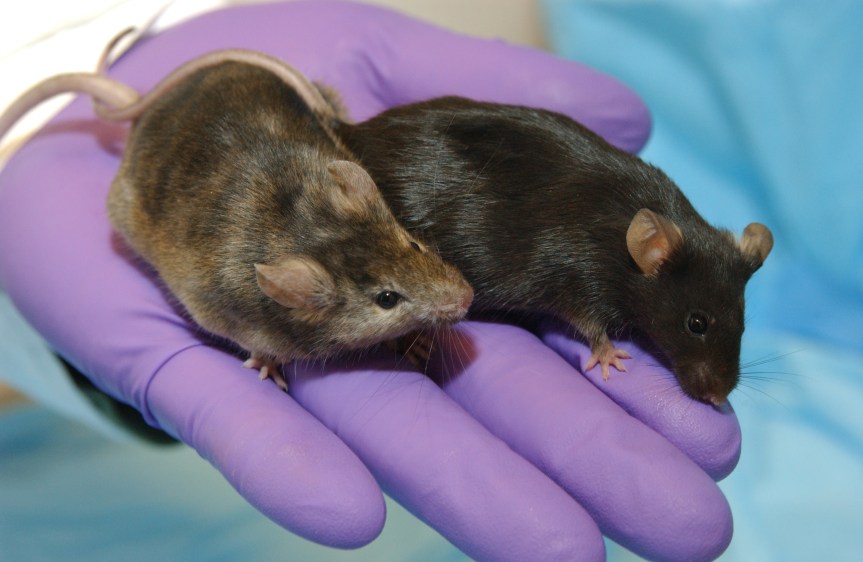

Medical images can also be turned into histograms by counting how many times different pixels fall into different ranges of values, as some types of tissue appear brighter or darker than others. We worked with Dr James O’Connor, Head of Imaging within the Manchester Cancer Research Centre, on a project using lab mice with implanted tumours. We used Linear Poisson Modelling and medical imaging to learn how tumours developed with and without treatment. We then measured the size of the effect that treatment had on the tumours in comparison to those in the untreated, control group. We showed that we could quadruple the precision of these measurements of change in comparison to conventional measurement approaches.
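As a small illustration of turning an image into a histogram, the Python sketch below counts how many pixel values fall into each range of brightness. The pixel values and the bin edges are invented for the example, and the function name is hypothetical; real medical images would have many more pixels and carefully chosen ranges.

```python
from bisect import bisect_right


def intensity_histogram(pixels, bin_edges):
    """Count pixels per brightness range.

    bin_edges lists the boundaries in increasing order; each bin includes
    its lower edge and excludes its upper edge.
    """
    counts = [0] * (len(bin_edges) - 1)
    for value in pixels:
        index = bisect_right(bin_edges, value) - 1
        if 0 <= index < len(counts):  # ignore values outside all bins
            counts[index] += 1
    return counts


# Made-up 8-bit pixel values and four equal-width brightness ranges.
pixels = [12, 40, 200, 130, 90, 250, 5, 64]
bin_edges = [0, 64, 128, 192, 256]

histogram = intensity_histogram(pixels, bin_edges)
print(histogram)  # [3, 2, 1, 2]
```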

From Mars to Mice

There are also benefits to be gained by improving the ability to make quantitative measurements for scientific research, especially within medical applications. When I started my PhD, I did not intend to get involved in animal testing. In fact, it is something that I really didn’t want to get involved in. I was much happier looking at dead rocks in space. However, animal testing does happen and the data produced needs to be analysed. Ethically, it is important that we do not use animals unnecessarily; therefore we should make efforts to get the maximum amount of data possible from the animals used. Where possible, and without compromising the scientific aims, we should reduce the use of animals.

Reducing the number of animals used in medical studies can be difficult. Remember that measurements are noisy and uncertain. In general, the fewer animals used in a study, the noisier the measurements become. In some studies, researchers may use many more animals to increase their precision and decrease the uncertainty in their findings. Critics of “the 3Rs” (the principles of replacing, reducing and refining the use of animals in research) may rightly point out that using too few animals is actually unethical, because the results can be so uncertain as to make the research pointless. So, any method of reducing animal usage without reducing precision is very important. The “square-root dependency” rule suggests that if you want to double your precision you have to quadruple your sample size. If you want to quadruple your precision, you have to use 16 times as many animals.
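The square-root rule comes from the fact that the uncertainty on an average shrinks in proportion to one over the square root of the number of measurements. The short Python sketch below works through the arithmetic. It assumes independent measurements with the same spread for every animal, and the function names and numbers are illustrative only.

```python
import math


def standard_error(spread, n_animals):
    """Uncertainty on a group average, given the spread of single measurements."""
    return spread / math.sqrt(n_animals)


def animals_needed(current_n, precision_gain):
    """Animals required to improve precision by `precision_gain` times."""
    return math.ceil(current_n * precision_gain ** 2)


# With a spread of 8 units, 4 animals give an uncertainty of 4 units,
# while 16 animals are needed to halve that to 2 units.
print(standard_error(8.0, 4), standard_error(8.0, 16))

# Starting from 10 animals: doubling and quadrupling the precision.
print(animals_needed(10, 2), animals_needed(10, 4))
```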

Yet, there is another way to improve measurements without increasing animal numbers. We have shown with Linear Poisson Modelling that we can already quadruple the precision of some tumour change measurements without increasing the number of animals used. This quadrupling is equivalent to increasing the sample size by a factor of 16. We can do with one mouse what previously would have taken 16. We can do with 10 mice what previously would have required 160! We’re still using mice, but potentially far fewer. This is better for the animals and cheaper for those who pay for the research. It also provides the opportunity to measure effects that were previously too small to observe, and which might be important for personalised medicine or advances in cancer treatment.

Paul Tar

The University of Manchester